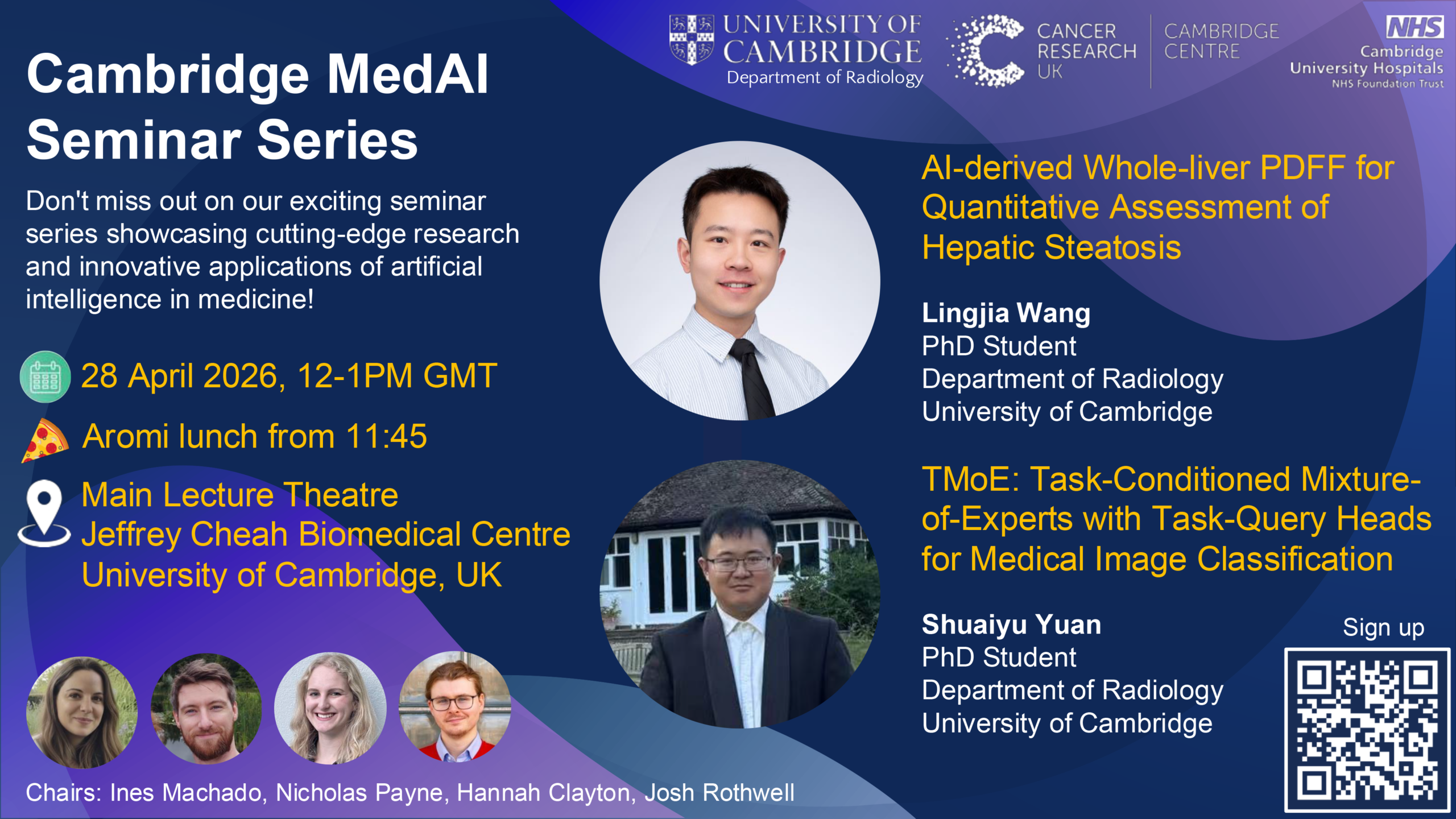

This month’s seminar will be held on Thursday 28 March 2026, 12-1pm at the Jeffrey Cheah Biomedical Centre (Main Lecture Theatre), University of Cambridge and streamed online via Zoom.

A light lunch from Aromi will be served from 11:45.

This is the Eventbrite link to sign up.

The event will feature the following talks:

AI-derived Whole-liver PDFF for Quantitative Assessment of Hepatic Steatosis – Lingjia Wang, PhD Candidate, Department of Radiology, University of Cambridge

Lingjia is a second-year PhD candidate in the Department of Radiology at the University of Cambridge, working on AI-based disease prediction and opportunistic screening in CT and MRI. Lingjia’s research particularly focuses on translating automated imaging biomarkers into PACS-integrated clinical workflows.

Abstract: Hepatic steatosis exhibits spatially heterogeneous fat distribution that may be incompletely captured by routine region-of-interest (ROI)–based proton density fat fraction (PDFF) measurements. This retrospective study evaluated an AI-derived whole-liver PDFF framework in 556 abdominal liver MRI examinations (2023–2025). Automated whole-liver and Couinaud segment masks were generated using TotalSegmentator on 3D Dixon images and applied to PDFF maps, excluding voxels <0% or >55%. Agreement between whole-liver and radiologist-reported ROI PDFF was assessed using Bland–Altman analysis, and intrahepatic fat heterogeneity was analysed across Couinaud segments. Whole-liver PDFF showed good agreement with ROI PDFF (mean bias −0.35%; limits of agreement −5.48% to +4.78%). Segmental analysis demonstrated greater variability and lower mean PDFF in the left lobe compared with the right lobe. AI-derived whole-liver PDFF provides a standardised assessment of hepatic fat and captures spatial heterogeneity beyond single-ROI measurements.
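For readers unfamiliar with the statistics in this abstract, the sketch below illustrates the voxel exclusion step and a standard Bland–Altman calculation (mean bias, with limits of agreement taken as bias ± 1.96 × the standard deviation of the paired differences). It is a minimal illustration, not the authors' pipeline: the function names, the sample-standard-deviation convention, and the example numbers are assumptions made here for demonstration.

```python
from statistics import mean, stdev


def masked_mean_pdff(pdff_values, lo=0.0, hi=55.0):
    """Mean PDFF (%) over voxels inside a mask, dropping values <lo or >hi.

    `pdff_values` is a flat list of PDFF percentages for the masked voxels
    (hypothetical input format; a real pipeline would read image arrays).
    """
    kept = [v for v in pdff_values if lo <= v <= hi]
    if not kept:
        raise ValueError("no voxels left after exclusion")
    return mean(kept)


def bland_altman(method_a, method_b):
    """Return (bias, lower_loa, upper_loa) for paired measurements.

    Differences are computed as a - b; limits of agreement use the
    conventional bias +/- 1.96 * sample SD of the differences.
    """
    if len(method_a) != len(method_b) or len(method_a) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [a - b for a, b in zip(method_a, method_b)]
    bias = mean(diffs)
    half_width = 1.96 * stdev(diffs)
    return bias, bias - half_width, bias + half_width


if __name__ == "__main__":
    # Made-up numbers for illustration only, not study data.
    whole_liver = [10.0, 12.0, 8.0, 15.0]
    roi = [11.0, 12.0, 9.0, 14.0]
    bias, lower, upper = bland_altman(whole_liver, roi)
    print(f"bias={bias:.2f}%, LoA=({lower:.2f}%, {upper:.2f}%)")
```

Under this convention the limits of agreement are symmetric about the bias, which matches the figures quoted in the abstract (−0.35% ± 5.13%).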

TMoE: Task-Conditioned Mixture-of-Experts with Task-Query Heads for Medical Image Classification – Shuaiyu Yuan, PhD student, Department of Radiology, University of Cambridge

Abstract: Medical image classification can cover heterogeneous datasets from various modalities, dimensionalities, anatomical focuses and diagnostic tasks. While training separate models is costly, performing a combined supervised learning with a fully shared backbone often suffers from negative transfer and limited feature extraction capacity. We propose TMoE, a Task-Conditioned Mixture-of-Experts with Task-Query Heads for multi-task medical image classification. TMoE augments a vision Transformer backbone by replacing feed-forward networks (FFN) in selected Transformer blocks with sparse MoE layers. A Top-k router, conditioned on a learned task embedding, dispatches tokens to subsets of experts, enabling task-dependent capacity allocation. We also introduce task-query heads, where a per-task learned query performs cross-attention over backbone tokens to produce a task-specific representation, followed by a lightweight task-specific classifier.
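As a rough illustration of the routing idea described in this abstract, the sketch below scores each expert for a single token, keeps the Top-k experts, and normalises their gate weights with a softmax. The abstract does not say how the router is conditioned on the task embedding; concatenating the token and task embedding before a linear scoring layer is an assumption made here, as are the function name `top_k_route` and the toy weights.

```python
import math


def top_k_route(token, task_embedding, router_weights, k=2):
    """Return [(expert_index, gate_weight), ...] for one token.

    `router_weights` holds one row per expert; each row has
    len(token) + len(task_embedding) entries. Conditioning by
    concatenation is an assumption, not the published TMoE design.
    """
    features = list(token) + list(task_embedding)
    if any(len(row) != len(features) for row in router_weights):
        raise ValueError("router row length must match feature length")
    if not 1 <= k <= len(router_weights):
        raise ValueError("k must be between 1 and the number of experts")

    # One linear score (logit) per expert.
    logits = [sum(w * x for w, x in zip(row, features)) for row in router_weights]

    # Keep the k highest-scoring experts.
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]

    # Softmax over the selected logits only (shifted for numerical stability).
    peak = max(logits[i] for i in top)
    exps = [math.exp(logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


if __name__ == "__main__":
    weights = [[1.0, 0.0, 0.0], [0.0, 0.0, 2.0], [0.0, 1.0, 0.0]]  # toy values
    token = [1.0, 0.0]
    print(top_k_route(token, [1.0], weights))   # routing under one task embedding
    print(top_k_route(token, [-1.0], weights))  # same token, different task
```

In the toy example the same token is sent to different experts when only the task embedding changes, which is the task-dependent capacity allocation the abstract refers to.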